Augmenting Reinforcement Learning with Behavior Primitives for Diverse Manipulation Tasks

Soroush Nasiriany Huihan Liu Yuke Zhu

The University of Texas at Austin

IEEE International Conference on Robotics and Automation (ICRA), 2022

Realistic manipulation tasks require a robot to interact with an environment with a prolonged sequence of motor actions. While deep reinforcement learning methods have recently emerged as a promising paradigm for automating manipulation behaviors, they usually fall short in long-horizon tasks due to the exploration burden. This work introduces Manipulation Primitive-augmented reinforcement Learning (MAPLE), a learning framework that augments standard reinforcement learning algorithms with a pre-defined library of behavior primitives. These behavior primitives are robust functional modules specialized in achieving manipulation goals, such as grasping and pushing. To use these heterogeneous primitives, we develop a hierarchical policy that involves the primitives and instantiates their executions with input parameters. We demonstrate that MAPLE outperforms baseline approaches by a significant margin on a suite of simulated manipulation tasks. We also quantify the compositional structure of the learned behaviors and highlight our method's ability to transfer policies to new task variants and to physical hardware.

Method Overview

Overview of MAPLE. (left) We present a learning framework that augments the robot's atomic motor actions with a library of versatile behavior primitives. (middle) Our method learns to compose these primitives via reinforcement learning. (right) This enables the agent to solve complex long-horizon manipulation tasks.

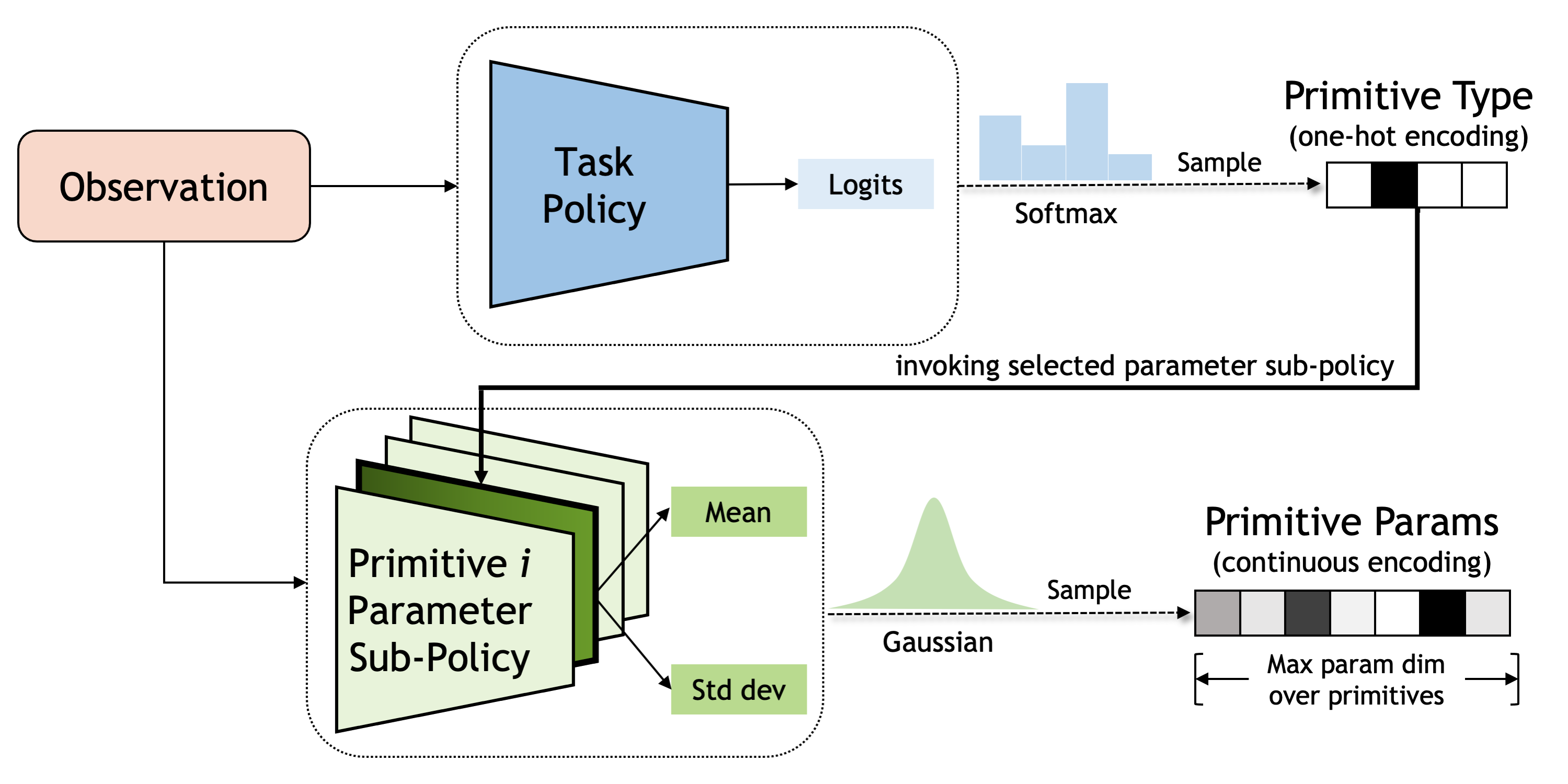

Integrating Heterogeneous Primitives with a Hierarchical Policy

Our goal is to incorporate a heterogeneous set of primitives that take input parameters of different dimensions, operate at variable temporal lengths, and produce distinct behaviors. To that end, we adopt a hierarchical policy: at the high level, a task policy determines the primitive type, and at the low level, a parameter policy determines the corresponding primitive parameters. This hierarchical design facilitates modular reasoning, delegating the high level to focus on which primitive to execute and the low level to focus on how to instantiate that primitive.
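The two-level decision described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the primitive names, their parameter dimensions, the placeholder scoring weights, and the choice to emit a fixed-size parameter vector that is truncated to each primitive's dimension are all assumptions made here for illustration. The random and linear stand-ins take the place of learned networks.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Primitive:
    name: str
    param_dim: int  # number of input parameters this primitive expects


# Hypothetical library: names and dimensions are illustrative assumptions.
LIBRARY = [
    Primitive("reach", 3),
    Primitive("grasp", 4),
    Primitive("push", 7),
    Primitive("atomic", 5),
]
MAX_PARAM_DIM = max(p.param_dim for p in LIBRARY)

# Placeholder weights standing in for a learned task-policy network.
TASK_WEIGHTS = [0.5, -0.2, 0.1, 0.3]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def task_policy(state, rng):
    """High level: sample the index of the primitive to execute."""
    logits = [w * sum(state) for w in TASK_WEIGHTS]
    probs = softmax(logits)
    return rng.choices(range(len(LIBRARY)), weights=probs, k=1)[0]


def parameter_policy(state, primitive_index, rng):
    """Low level: produce parameters for the chosen primitive.

    Assumption: sample a vector of the maximum dimension, squash it to
    [-1, 1], and keep only the entries the chosen primitive needs.
    """
    full = [math.tanh(rng.gauss(0.0, 1.0)) for _ in range(MAX_PARAM_DIM)]
    return full[: LIBRARY[primitive_index].param_dim]


def act(state, rng):
    """One hierarchical decision: which primitive, then how to instantiate it."""
    index = task_policy(state, rng)
    params = parameter_policy(state, index, rng)
    return LIBRARY[index], params


if __name__ == "__main__":
    rng = random.Random(0)
    primitive, params = act([0.1, -0.2, 0.3], rng)
    print(primitive.name, len(params))
```

Conditioning the parameter policy on the chosen primitive keeps the two decisions separate: the high level only needs a categorical output, while the low level handles the differing parameter dimensions.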

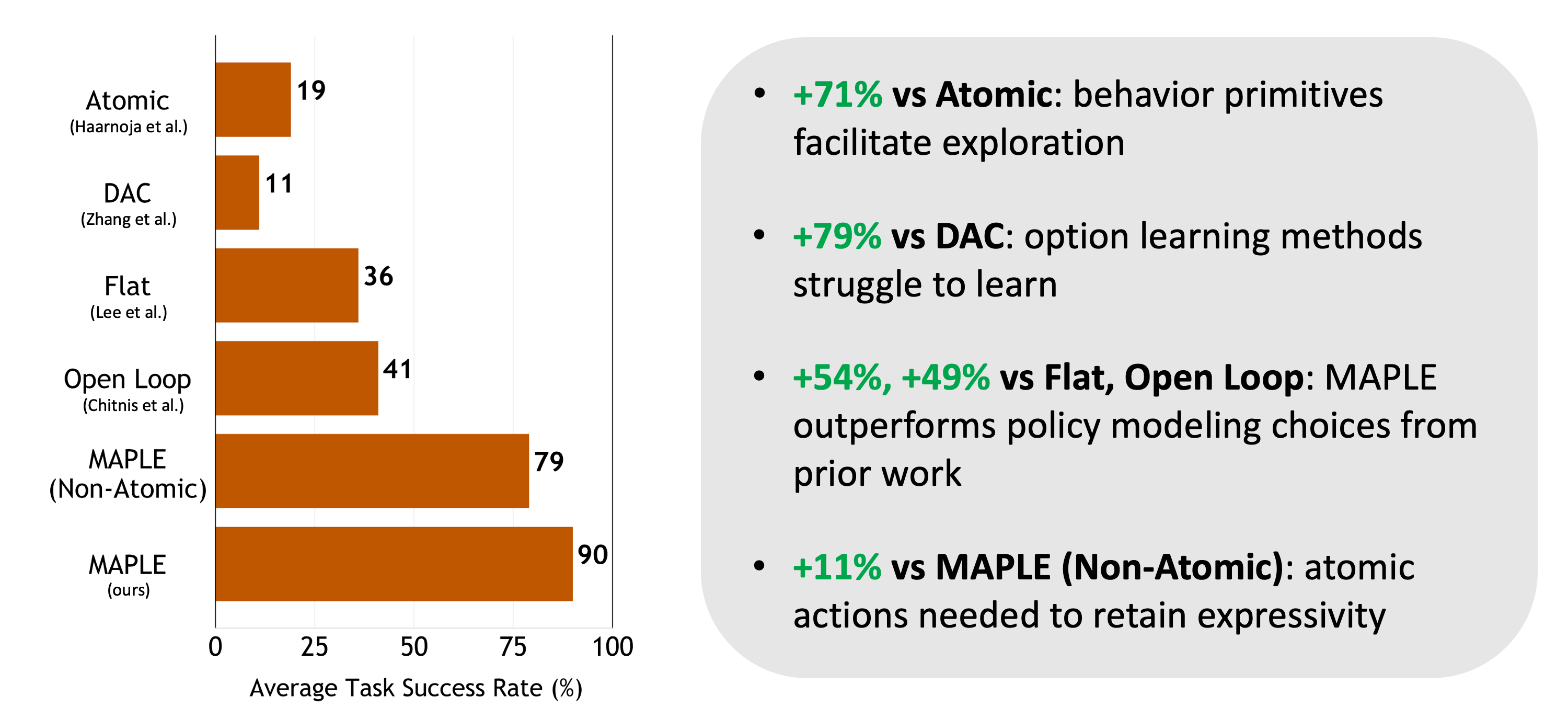

Simulated Environment Evaluation

We perform evaluations on eight manipulation tasks. The first six come from the robosuite benchmark, and we designed the last two (cleanup, peg insertion) to test our method in multi-stage, contact-rich tasks.

Model Analysis

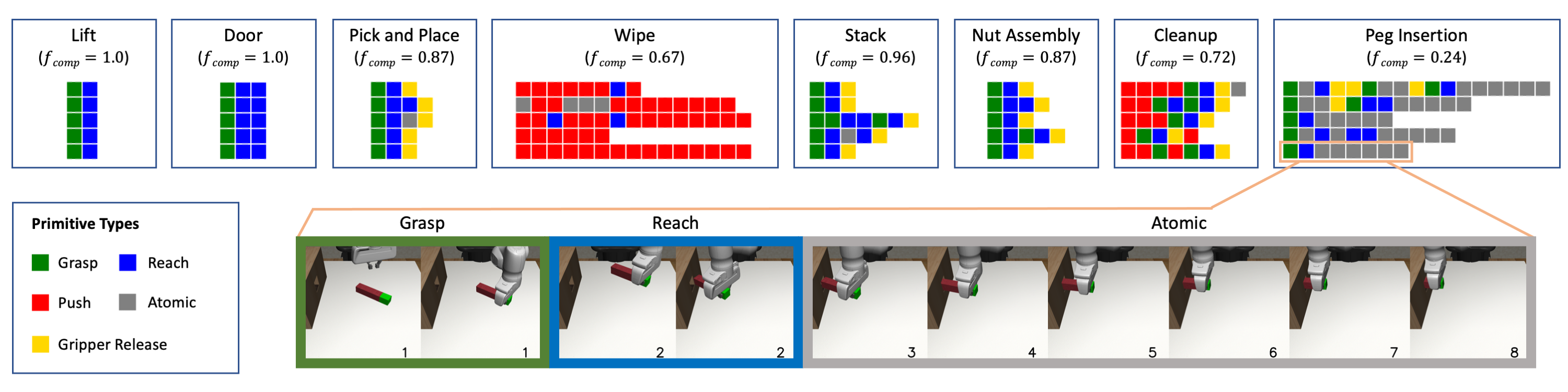

We present an analysis of the task sketches that our method learned for each task.

Each row corresponds to a single sketch progressing temporally from left to right.

We see evidence that the agent unveils compositional task structures by applying temporally extended primitives whenever appropriate and relying on atomic actions otherwise.

For example, for the peg insertion task the agent leverages the grasping primitive to pick up the peg and the reaching primitive to align the peg with the hole in the block, but then it uses atomic actions for the contact-rich insertion phase.
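As a rough illustration of what a task sketch records, the snippet below collapses a per-step log of primitive types into temporally ordered segments. The log is a made-up example that loosely mirrors the peg insertion description above, and the `task_sketch` helper is hypothetical rather than part of the authors' code.

```python
from itertools import groupby


def task_sketch(primitive_log):
    """Collapse consecutive repeats of a primitive into (name, count) segments."""
    return [(name, len(list(group))) for name, group in groupby(primitive_log)]


# Hypothetical rollout: grasp the peg, reach to align it, then atomic actions.
log = ["grasp", "reach", "atomic", "atomic", "atomic"]
print(task_sketch(log))  # [('grasp', 1), ('reach', 1), ('atomic', 3)]
```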

Real-World Evaluation

Because our behavior primitives offer high-level action abstractions and encapsulate the low-level complexities of motor actuation, our policies can transfer directly to the real world. We trained MAPLE on simulated versions of the stack and cleanup tasks and executed the resulting policies in the real world. Here we show rollouts on the cleanup task (played at 5x).
