Model-Based Runtime Monitoring with Interactive Imitation Learning

Huihan Liu, Shivin Dass, Roberto Martín-Martín, Yuke Zhu

The University of Texas at Austin

IEEE International Conference on Robotics and Automation (ICRA), 2024

Robot learning methods have recently made great strides, but generalization and robustness challenges still hinder their widespread deployment. Failing to detect and address potential failures leaves state-of-the-art learning systems unready for high-stakes tasks. Recent advances in interactive imitation learning have proposed a promising framework for human-robot teaming, enabling robots to operate safely and to continually improve their performance through deployment data. Nonetheless, existing methods typically require constant human supervision and preemptive feedback, limiting their usability in realistic domains. In this work, we aim to endow a robot with the ability to monitor and detect errors during runtime task execution. We introduce a model-based runtime monitoring algorithm that learns from deployment data to detect system anomalies and anticipate failures. Unlike prior work that cannot foresee future failures or requires failure experiences for training, our method learns a latent-space dynamics model and a failure classifier that together let it simulate future action outcomes, so it can detect out-of-distribution and high-risk states preemptively. We train our method within an interactive imitation learning framework, where it continually updates the model from the experiences of the human-robot team collected using trustworthy deployments. Consequently, our method reduces the human workload needed over time while ensuring reliable task execution. We demonstrate that our method outperforms the baselines across system-level and unit-test metrics, with on average 23% and 40% higher success rates in simulation and on physical hardware, respectively.

Overview

We introduce a model-based runtime monitoring algorithm that continuously learns to predict errors from deployment data. We integrate this runtime monitoring algorithm into an interactive imitation learning framework to ensure trustworthy long-term deployment.

Runtime Monitoring in Operation

We consider a human-in-the-loop learning and deployment framework in which a robot performs tasks while humans are available to provide feedback in the form of interventions. Rather than having the human continuously monitor the system and step in whenever necessary, our work develops a runtime monitoring mechanism that queries human feedback only when an error predictor detects an error.
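A minimal sketch of this gated deployment loop, under simplifying assumptions: scalar toy states, one decision per step, and hypothetical callables `robot_policy`, `human_policy`, `error_predictor`, and `step_env` standing in for the real components. In a real deployment the human would typically keep control for a stretch of time after taking over; here control returns to the robot at the next step.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Transition:
    state: float
    action: float
    human_intervened: bool  # kept so interventions can later supervise the failure classifier


def run_episode(
    robot_policy: Callable[[float], float],
    human_policy: Callable[[float], float],
    error_predictor: Callable[[float, float], bool],
    step_env: Callable[[float, float], float],
    init_state: float,
    horizon: int,
) -> List[Transition]:
    """Roll out one episode, querying the human only when an error is predicted.

    All callables are hypothetical placeholders; the actual system operates on
    image observations and learned models.
    """
    trajectory: List[Transition] = []
    state = init_state
    for _ in range(horizon):
        action = robot_policy(state)
        flagged = error_predictor(state, action)
        if flagged:
            # The runtime monitor raised an alarm: hand this step to the human.
            action = human_policy(state)
        trajectory.append(Transition(state, action, flagged))
        state = step_env(state, action)
    return trajectory


# Toy usage: the monitor flags any action that would push the state above 2.5.
traj = run_episode(
    robot_policy=lambda s: 1.0,
    human_policy=lambda s: -1.0,
    error_predictor=lambda s, a: s + a > 2.5,
    step_env=lambda s, a: s + a,
    init_state=0.0,
    horizon=5,
)
print([t.human_intervened for t in traj])  # [False, False, True, False, True]
```

Only the flagged steps cost human attention, which is the mechanism by which the monitor aims to reduce human workload compared with continuous supervision.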

Model Architecture

We train a dynamics model, implemented as a conditional variational autoencoder (cVAE), to predict the next latent state given the current state and action. We also train a policy and a failure classifier head on top of the latent state. The dynamics model and policy are trained on the collected experiences, while the failure classifier uses the human intervention states to infer failure states.
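To make the cVAE dynamics model and the classifier head concrete, here is a minimal, dependency-free sketch. The decoder and the classifier weights are hypothetical hand-set placeholders (a real model learns both as neural networks), and a standard normal prior over the cVAE latent is assumed. The parts that follow the standard cVAE recipe are the reparameterized sample `z = mu + sigma * eps`, sampling `z` from the prior at test time, and the KL regularizer.

```python
import math
import random
from typing import List, Sequence

Vector = List[float]


def reparameterize(mu: Sequence[float], log_var: Sequence[float], rng: random.Random) -> Vector:
    """Sample z = mu + sigma * eps with eps ~ N(0, I) and sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """KL(N(mu, diag(sigma^2)) || N(0, I)), the regularizer in the cVAE training loss."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def decode_next_latent(s: Sequence[float], a: Sequence[float], z: Sequence[float]) -> Vector:
    """Hypothetical decoder p(s' | s, a, z): a residual update s + a + 0.1 * z.

    Assumes the action has the same dimension as the latent, purely for illustration.
    """
    return [si + ai + 0.1 * zi for si, ai, zi in zip(s, a, z)]


def predict_next_latent(s: Sequence[float], a: Sequence[float], rng: random.Random) -> Vector:
    """At test time, sample z from the prior N(0, I) and decode one possible next latent state."""
    zeros = [0.0] * len(s)
    z = reparameterize(zeros, zeros, rng)  # mu = 0, log_var = 0 gives a standard normal draw
    return decode_next_latent(s, a, z)


def failure_prob(s: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """Hypothetical linear failure-classifier head: sigmoid(w . s + b) on the latent state."""
    logit = sum(w * x for w, x in zip(weights, s)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


rng = random.Random(0)
next_latent = predict_next_latent([0.0, 1.0], [0.5, -0.5], rng)
print(len(next_latent), failure_prob(next_latent, [1.0, 1.0], -1.0))
```

Because both the decoder output and the classifier input live in the same latent space, the classifier can score predicted future states as easily as observed ones.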

OOD Detection and Failure Detection

Our method performs model-based runtime monitoring with two learnable components: a dynamics model and a failure classifier. We first construct a latent space in which image observations are encoded into feature vectors that serve as latent states. We then train a dynamics model that predicts the next latent state conditioned on the current observation and action, and we train a policy on the same latent space. Because the dynamics model and the policy share this latent state space, our method (MoMo) can simulate counterfactual trajectories and predict the outcomes of different actions.
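The sketch below illustrates how a shared latent space supports both checks. Starting from the current latent state, it imagines a short trajectory under a candidate first action followed by the policy, then reports a predicted failure if the failure classifier fires on any imagined state, and a predicted out-of-distribution (OOD) state if any imagined state is far from the latent states seen in training. The nearest-neighbor distance used as the OOD score is a stand-in chosen for illustration, not necessarily the paper's criterion; the toy dynamics, policy, classifier, thresholds, and horizon are all hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Latent = Tuple[float, ...]


def imagine(
    z0: Latent,
    first_action: float,
    policy: Callable[[Latent], float],
    dynamics: Callable[[Latent, float], Latent],
    horizon: int,
) -> List[Latent]:
    """Roll the latent dynamics forward: first_action at step 0, then the policy's actions."""
    states: List[Latent] = []
    z, action = z0, first_action
    for _ in range(horizon):
        z = dynamics(z, action)
        states.append(z)
        action = policy(z)
    return states


def monitor(
    imagined: Sequence[Latent],
    failure_prob: Callable[[Latent], float],
    train_latents: Sequence[Latent],
    fail_threshold: float,
    ood_threshold: float,
) -> Tuple[bool, bool]:
    """Return (predicted_failure, predicted_ood) for one imagined trajectory."""
    predicted_failure = any(failure_prob(z) > fail_threshold for z in imagined)
    # Stand-in OOD score: distance to the nearest latent state seen during training.
    predicted_ood = any(
        min(math.dist(z, m) for m in train_latents) > ood_threshold for z in imagined
    )
    return predicted_failure, predicted_ood


def toy_dynamics(z: Latent, a: float) -> Latent:
    return (z[0] + a,)


def toy_policy(z: Latent) -> float:
    return 1.0


def toy_failure_prob(z: Latent) -> float:
    return 1.0 if z[0] >= 3.0 else 0.0


seen_latents = [(0.0,), (1.0,), (2.0,)]

# Compare the predicted outcomes of three candidate first actions.
for a0 in (-1.0, 1.0, 5.0):
    traj = imagine((0.0,), a0, toy_policy, toy_dynamics, horizon=3)
    print(a0, monitor(traj, toy_failure_prob, seen_latents, 0.5, 1.5))
# -1.0 (False, False)
# 1.0 (True, False)
# 5.0 (True, True)
```

The second candidate reaches a known failure region while staying in-distribution, whereas the third is both failing and far from the training data, which shows why the two checks are complementary.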

Experiment Results

We evaluate on two simulated tasks, Nut Assembly and Threading, and two real-world tasks, Coffee Pod Packing and Gear Assembly.

System Performance

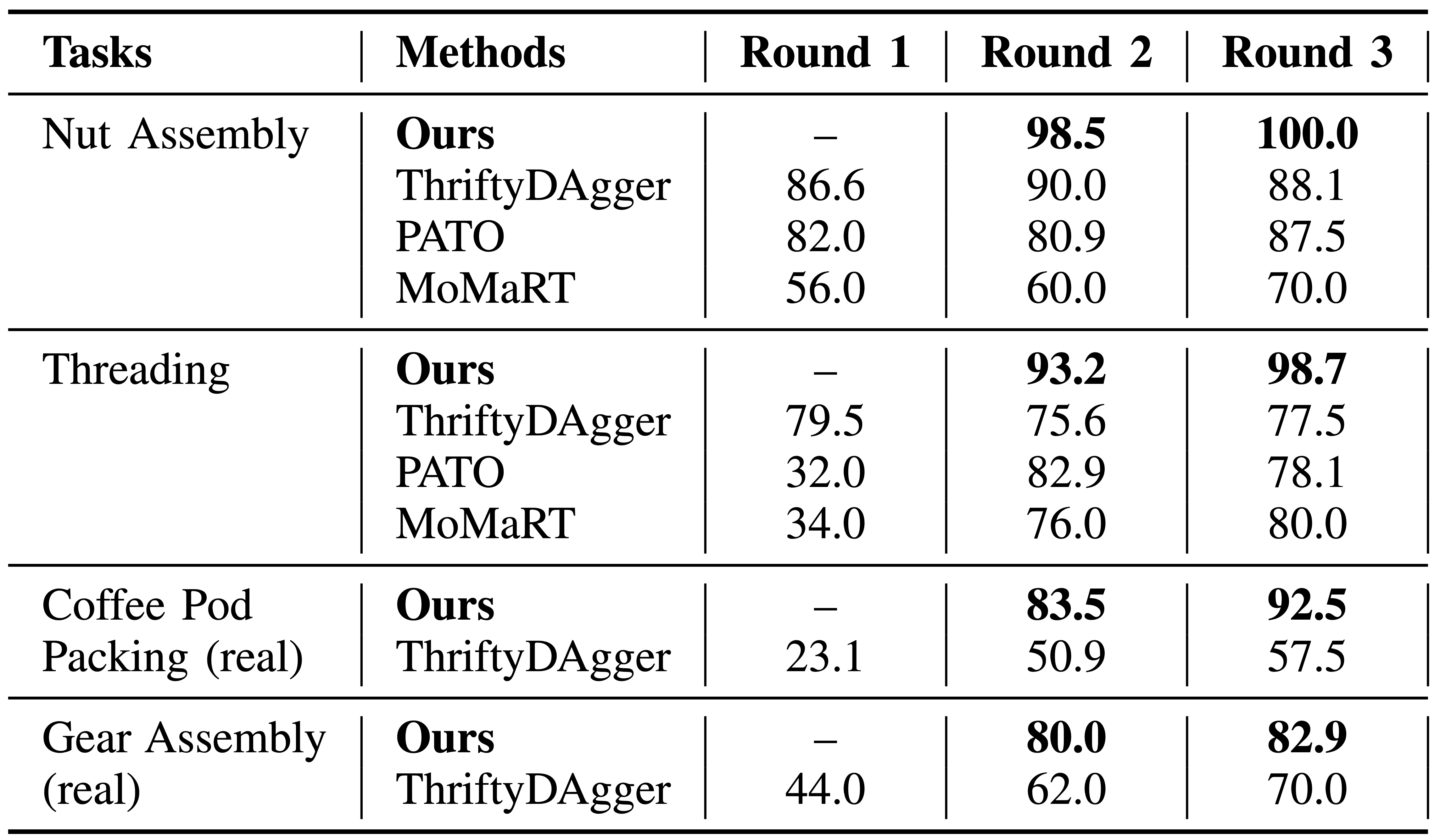

Combined Policy Performance (in Success Rates): Our method consistently outperforms the baseline across rounds. Note that the Round 1 results for Ours are N/A because our method uses full human monitoring in Round 1 as a warm start.

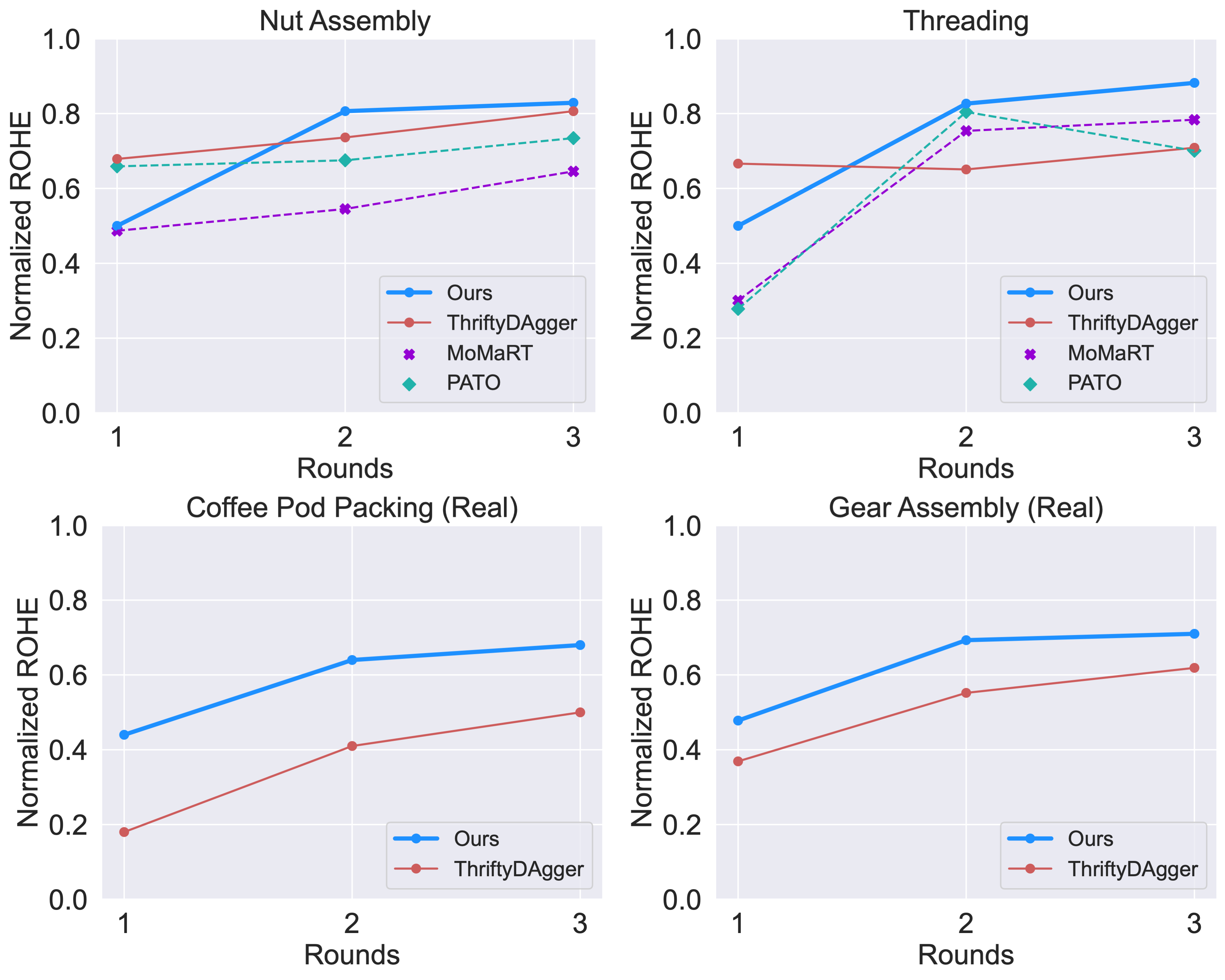

Return of Human Effort (RoHE): Our method generally has lower RoHE in the first round because of higher initial human engagement; RoHE improves in later rounds as our method becomes more effective at identifying important errors during deployment.

Unit Testing Error Predictors

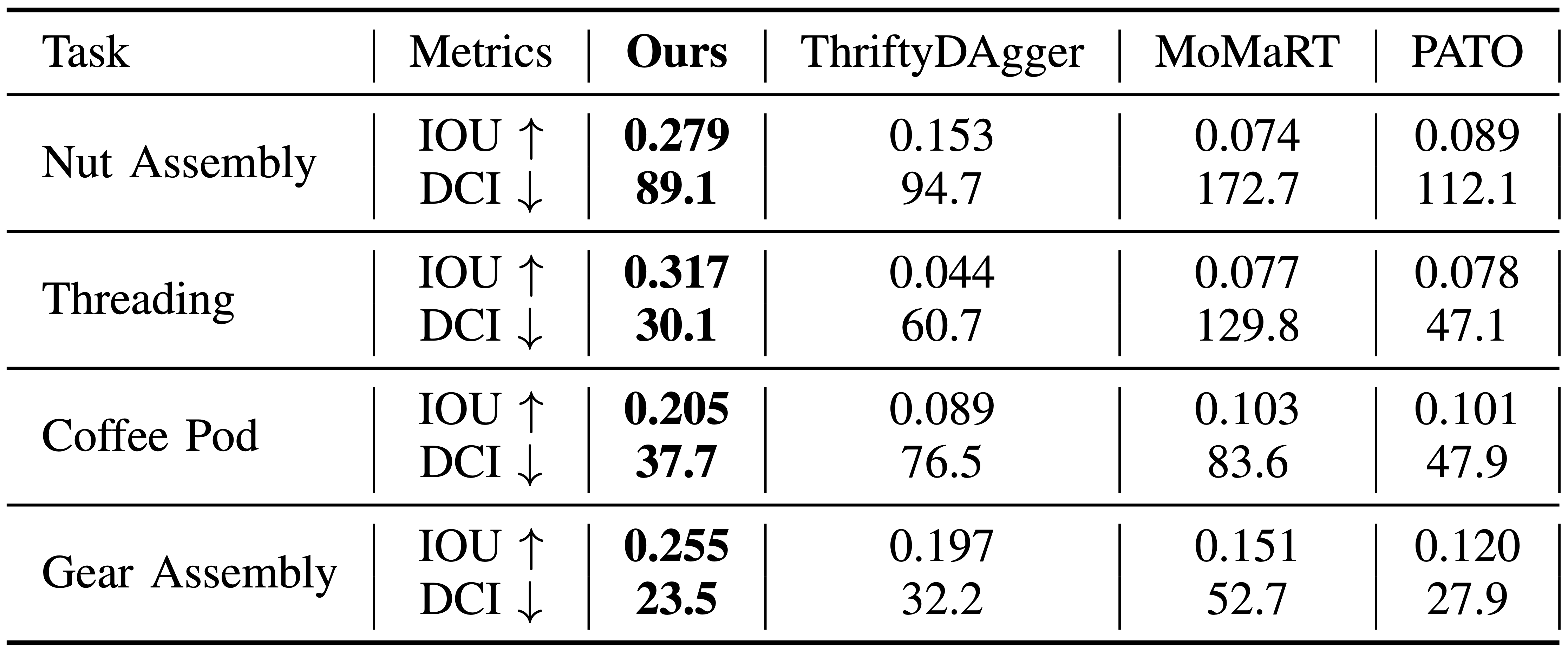

Unit Testing Error Predictors. Our method outperforms the baselines on both metrics. Higher IoU indicates greater overlap between detected and human-labeled failures, and lower DCI means that our method's failure events are closer to the true human failure labels.
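As a rough illustration of the IoU metric, the sketch below treats detected and human-labeled failures as per-timestep 0/1 masks over one episode and computes intersection over union. This per-timestep formulation, and the convention of returning 1.0 when neither mask flags any timestep, are assumptions; the paper's exact definition may differ, and DCI is not sketched here.

```python
from typing import Sequence


def temporal_iou(predicted: Sequence[int], labeled: Sequence[int]) -> float:
    """IoU between predicted and human-labeled failure timesteps (0/1 flags) of one episode."""
    if len(predicted) != len(labeled):
        raise ValueError("masks must cover the same timesteps")
    intersection = sum(1 for p, h in zip(predicted, labeled) if p and h)
    union = sum(1 for p, h in zip(predicted, labeled) if p or h)
    # Assumed convention: no predicted and no labeled failures counts as perfect agreement.
    return intersection / union if union else 1.0


# Detector fires at t = 1..3, human labels failure at t = 2..4: overlap 2, union 4.
print(temporal_iou([0, 1, 1, 1, 0], [0, 0, 1, 1, 1]))  # 0.5
```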

Ablations

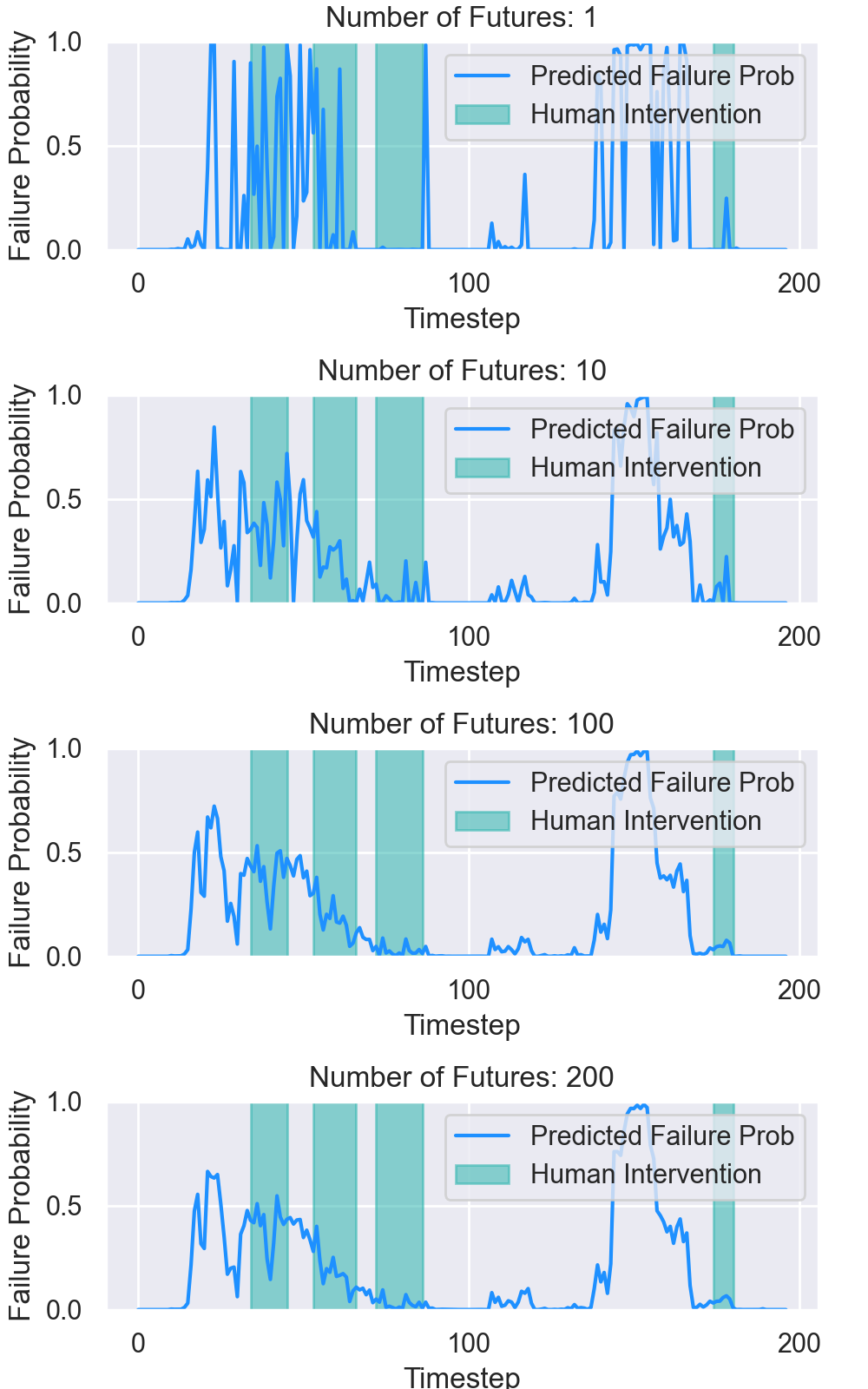

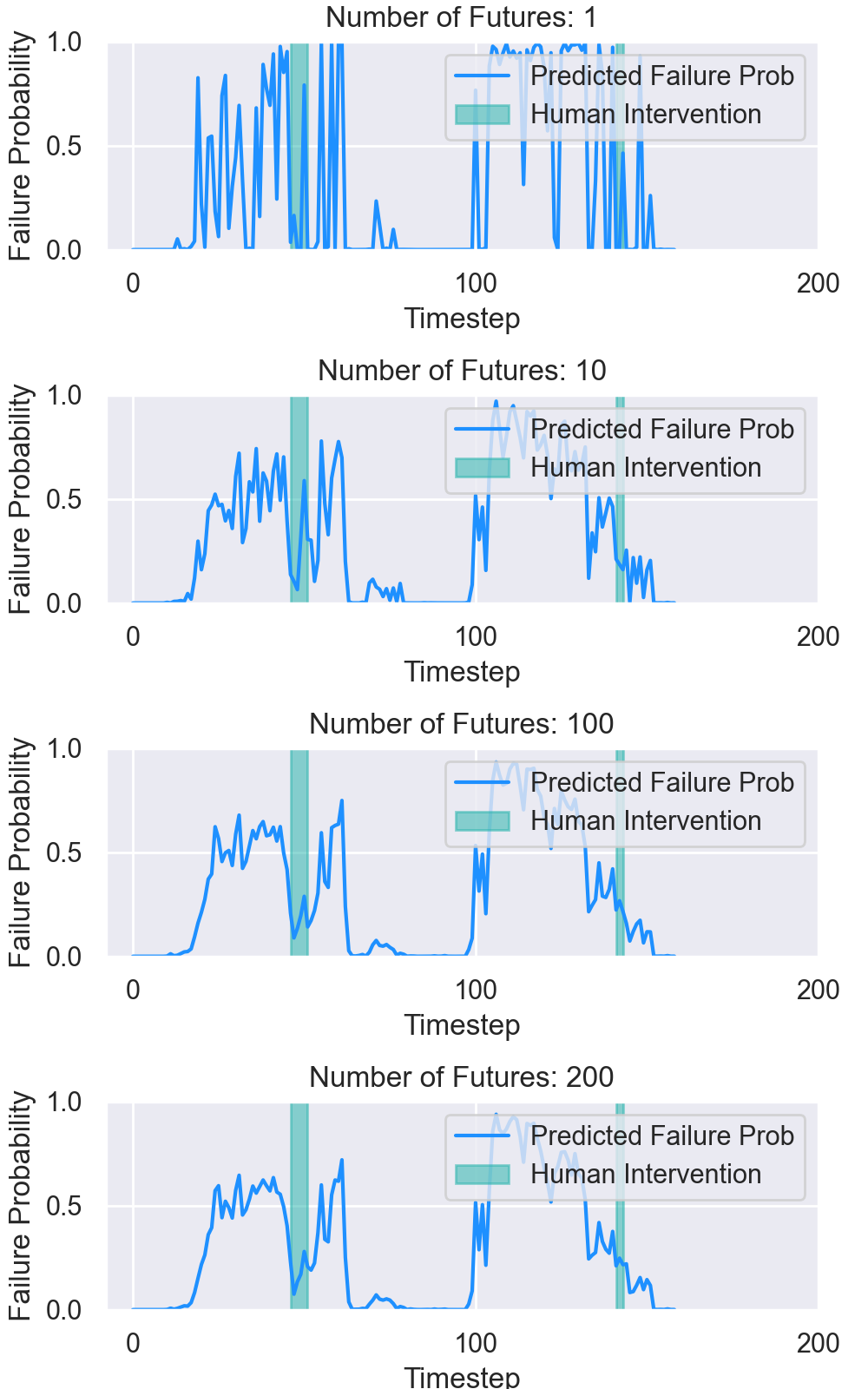

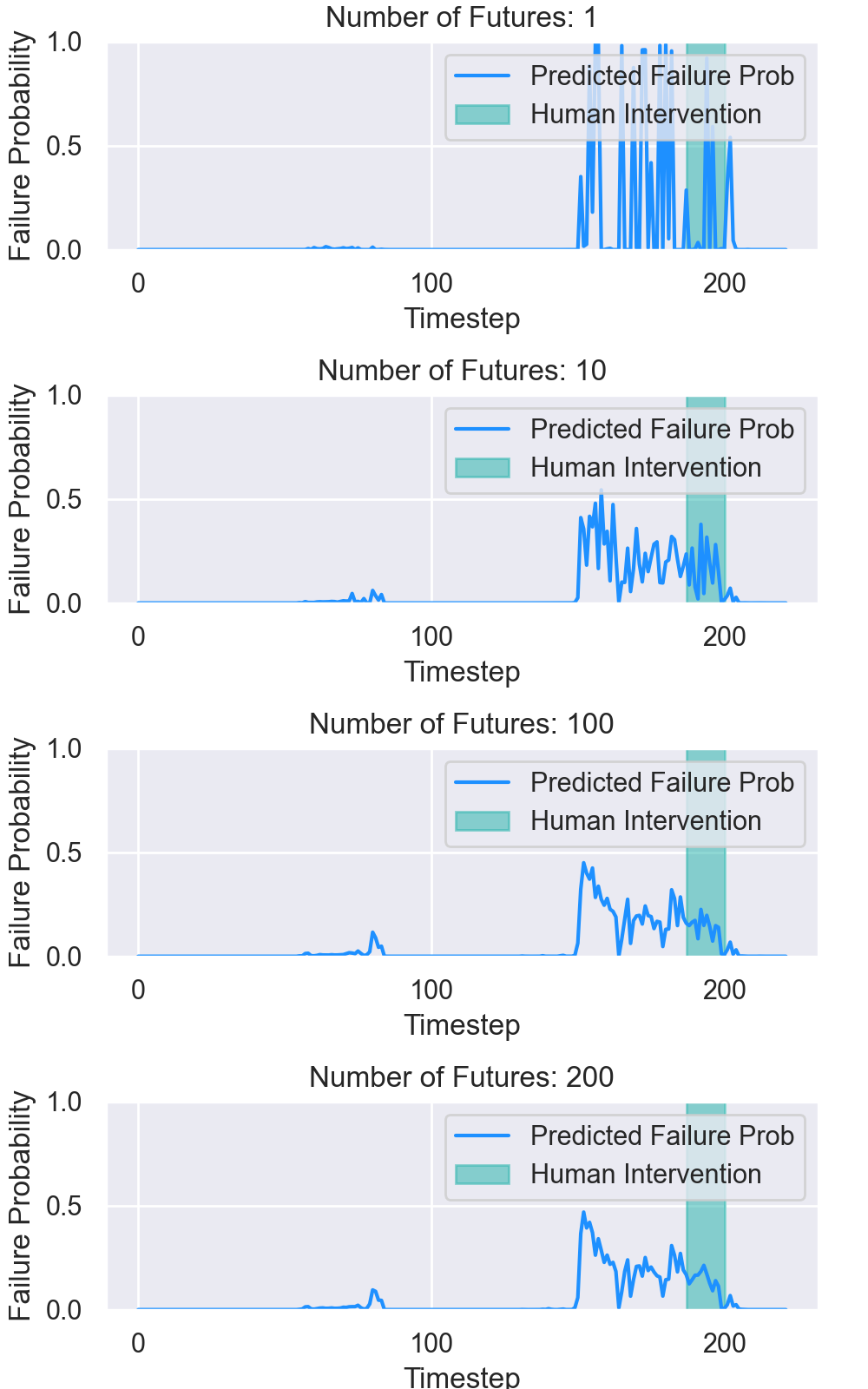

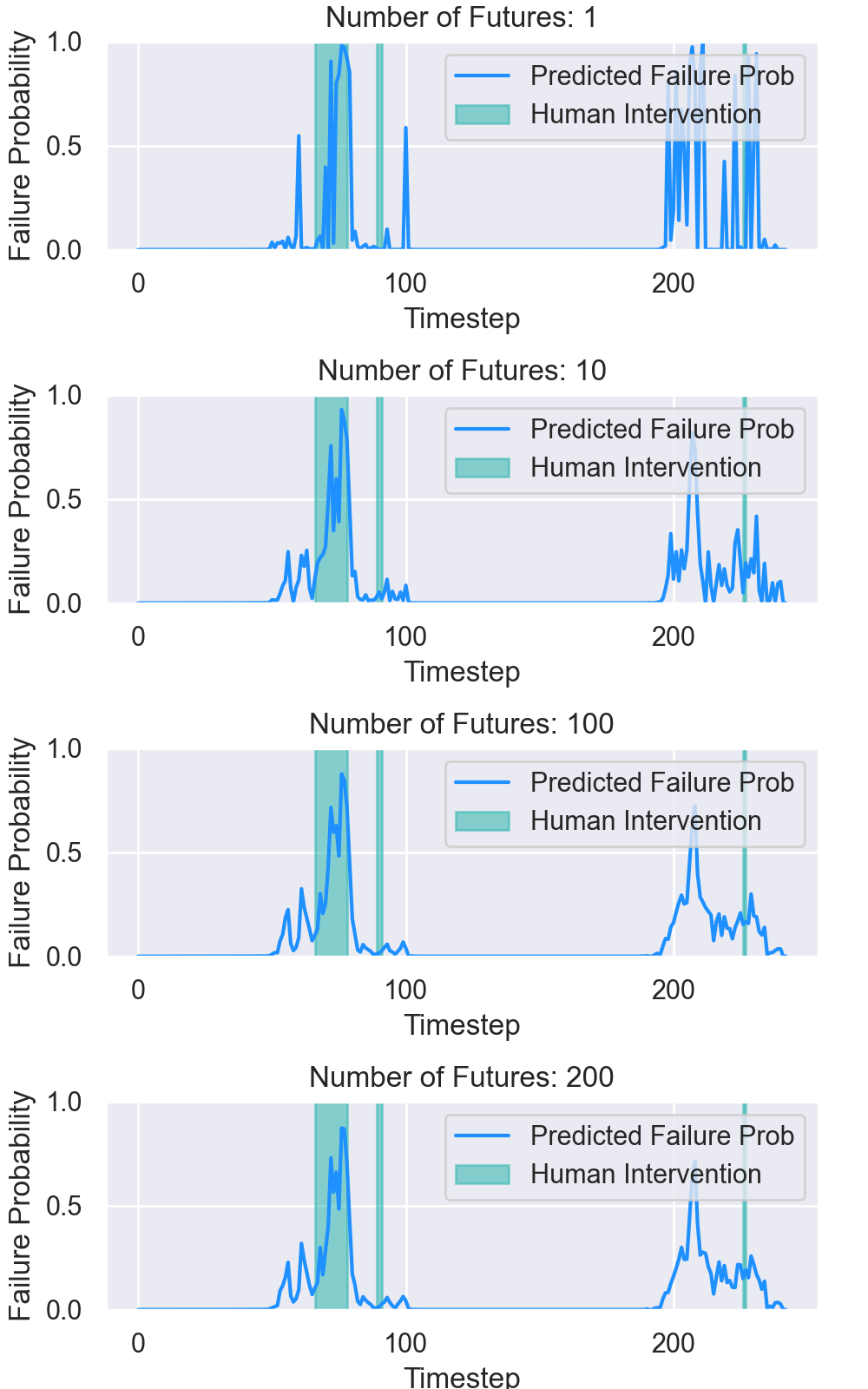

We draw samples from the stochastic latent space of the cVAE model to generate multiple predictions of future states. We evaluate each predicted future independently and then average the error predictor's outputs. Compared with predicting a single deterministic future, this makes our method more robust to prediction noise and yields more temporally consistent predictions.
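A minimal sketch of this sample-and-average scheme, assuming a hypothetical `sample_next` function that draws one stochastic next latent state (for instance, by sampling the cVAE latent) and a hypothetical `failure_prob` classifier. The number of samples is a free parameter; the toy dynamics below are purely illustrative.

```python
import random
import statistics
from typing import Callable


def averaged_failure_score(
    z: float,
    action: float,
    sample_next: Callable[[float, float, random.Random], float],
    failure_prob: Callable[[float], float],
    num_samples: int,
    rng: random.Random,
) -> float:
    """Average the failure classifier over several independently sampled futures."""
    if num_samples < 1:
        raise ValueError("num_samples must be at least 1")
    scores = [failure_prob(sample_next(z, action, rng)) for _ in range(num_samples)]
    return statistics.fmean(scores)


def noisy_step(z: float, a: float, rng: random.Random) -> float:
    # Toy stochastic dynamics: the outcome lands on either side of the failure boundary.
    return z + a + rng.choice([-1.0, 1.0])


def step_fails(z: float) -> float:
    return 1.0 if z > 0.0 else 0.0


rng = random.Random(0)
single = averaged_failure_score(0.0, 0.0, noisy_step, step_fails, 1, rng)
averaged = averaged_failure_score(0.0, 0.0, noisy_step, step_fails, 200, rng)
print(single, averaged)
```

With a single sample the score is either 0 or 1 and can flip from one step to the next; averaging over many samples approximates the underlying failure probability (0.5 in this toy), which is the smoothing effect the ablation examines.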

Ablation of Number of Future Predictions: Square

Ablation of Number of Future Predictions: Threading

Citation